TL;DR: Making AI text harder to detect takes more than running it through one undetectable AI text humanizer. The most reliable process combines a humanizer, deliberate manual edits that change rhythm and voice, and verification across multiple detectors, because results vary by platform. In one set of tests, GPTZero improved from 100% AI-generated to 91% AI-generated after humanization, while Originality.ai still flagged the text as AI with 100% confidence.

You probably have a draft that says the right things but still feels off. It reads clean, maybe even polished, yet detectors flag it and real readers can still sense the pattern.

That happens because most AI output fails in the same places. The wording is too predictable, the sentence rhythm is too even, and the voice sounds like nobody in particular.

A practical workflow fixes that. Start with a decent draft, run it through a humanizer, edit the parts that still sound synthetic, then check the final version across more than one detector before you publish or submit it.

Understanding the Telltale Signs of AI Writing

The reason AI text gets flagged isn't just that it sounds "robotic." Detectors look for patterns, and readers notice many of the same ones without using technical language.

One clue is predictable word choice. AI often reaches for safe transitions, tidy summaries, and balanced phrases. You see the same types of openings again and again: "In conclusion," "It is important to note," and similar filler.
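If you want to spot these phrases quickly, a short script can scan a draft for them. This is a minimal sketch, not how any detector works: the function name `find_stock_phrases` is made up for illustration, and only the first two phrases in the list come from this article. The rest are assumptions you should swap for the filler you actually see in your own drafts.

```python
import re

# Hypothetical starter list. The first two phrases are quoted in the article;
# the others are illustrative assumptions, not a validated set.
STOCK_PHRASES = [
    "in conclusion",
    "it is important to note",
    "in today's world",
    "plays a crucial role",
]

def find_stock_phrases(text, phrases=STOCK_PHRASES):
    """Return (phrase, count) pairs for each stock phrase found in text."""
    lowered = text.lower()
    hits = []
    for phrase in phrases:
        # Word boundaries keep the match from firing inside longer words.
        pattern = r"\b" + re.escape(phrase) + r"\b"
        count = len(re.findall(pattern, lowered))
        if count:
            hits.append((phrase, count))
    return hits

if __name__ == "__main__":
    draft = "In conclusion, it is important to note that drafts vary."
    for phrase, count in find_stock_phrases(draft):
        print(f"{phrase!r} appears {count} time(s)")
```

A hit is only a prompt to reread the sentence. Sometimes "in conclusion" is exactly what the paragraph needs.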

Another clue is uniform sentence rhythm. Humans vary pacing naturally. We write some short sentences. Then a longer one with an aside, a correction, or a change in direction. AI often produces a smoother, flatter cadence.

Predictability is easy to spot

Here is an AI-ish sentence:

Businesses can benefit from using artificial intelligence because it improves efficiency, enhances productivity, and supports better decision-making.

Nothing is technically wrong with it. The problem is that it could belong to almost any article on almost any site.

A more human version might look like this:

AI helps with the boring parts first. That matters more than the buzzwords, especially if your team spends half the week rewriting the same briefs, reports, or product copy.

The second version is more specific. It sounds like someone has seen the problem in practice.

Detectors also notice low variation

Think about how people write when they care about a topic. They interrupt themselves. They sharpen a point. They use a sentence fragment once in a while. They change tone depending on what comes next.

AI tends to iron all of that out.

A paragraph with low variation often has:

- Even sentence length that makes every line feel mechanically balanced

- Generic transitional phrases placed with unnatural neatness

- Abstract nouns instead of lived detail, such as "efficiency" or "innovation" without examples

- No personal stance so the passage sounds neutral in a way humans rarely are
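The first item on that list, even sentence length, is the easiest one to measure yourself. The sketch below counts words per sentence and reports how much the lengths vary. Treat it as a rough proxy under loud assumptions: the function names are hypothetical, the sentence splitter is naive (it will stumble on abbreviations like "e.g."), and no detector is known to compute rhythm this way.

```python
import re
import statistics

def sentence_lengths(text):
    """Split on ., ! or ? followed by whitespace; return word counts per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def rhythm_report(text):
    """Summarize sentence-length variation for a passage."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean_words": 0.0, "stdev_words": 0.0, "cv": 0.0}
    mean = statistics.mean(lengths)
    spread = statistics.pstdev(lengths)  # population standard deviation
    return {
        "sentences": len(lengths),
        "mean_words": mean,
        "stdev_words": spread,
        # Coefficient of variation: spread relative to the average length.
        "cv": spread / mean if mean else 0.0,
    }

if __name__ == "__main__":
    sample = (
        "We write some short sentences. Then a longer one with an aside, "
        "a correction, or a change in direction."
    )
    print(sentence_lengths(sample))
    print(rhythm_report(sample))
```

A value of `cv` near zero means every sentence is about the same size. There is no magic target number here, so compare your draft against something you wrote by hand rather than chasing a threshold.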

Voice is usually the missing ingredient

Most raw AI text has information, but not perspective. It doesn't sound like a student under deadline pressure, a strategist rewriting landing pages, or a founder trying to explain a product clearly. It sounds like a model predicting the next likely sentence.

That lack of voice matters because human writing usually leaves fingerprints. Small ones, but they're there.

Practical rule: If a paragraph could be dropped into ten different websites without changing a word, it's probably too generic to feel convincingly human.

This is one reason the category keeps growing. Undetectable AI says its platform has over 23 million users humanizing AI-generated text for work, school, and online publishing, which shows how common this problem has become as detection tools spread across classrooms, publishing workflows, and professional review processes (Undetectable AI humanizer page).

A quick diagnosis checklist

Before you humanize anything, read the draft and look for these signals:

| Pattern | What it looks like | Why it gets flagged |
|---|---|---|
| Predictable phrasing | Safe, familiar wording with no surprises | The language pattern looks statistically regular |
| Flat burstiness | Sentences all feel roughly the same size | Human writing usually has more rhythm shifts |
| Generic framing | Broad claims without concrete detail | The text feels assembled, not observed |
| No personal texture | No opinion, memory, hesitation, or emphasis | Readers can't sense a real author behind it |

When you understand those patterns, humanizing gets easier. You stop making random edits and start changing the exact features that make AI text look synthetic in the first place.

A Reliable Workflow to Make AI Text Undetectable

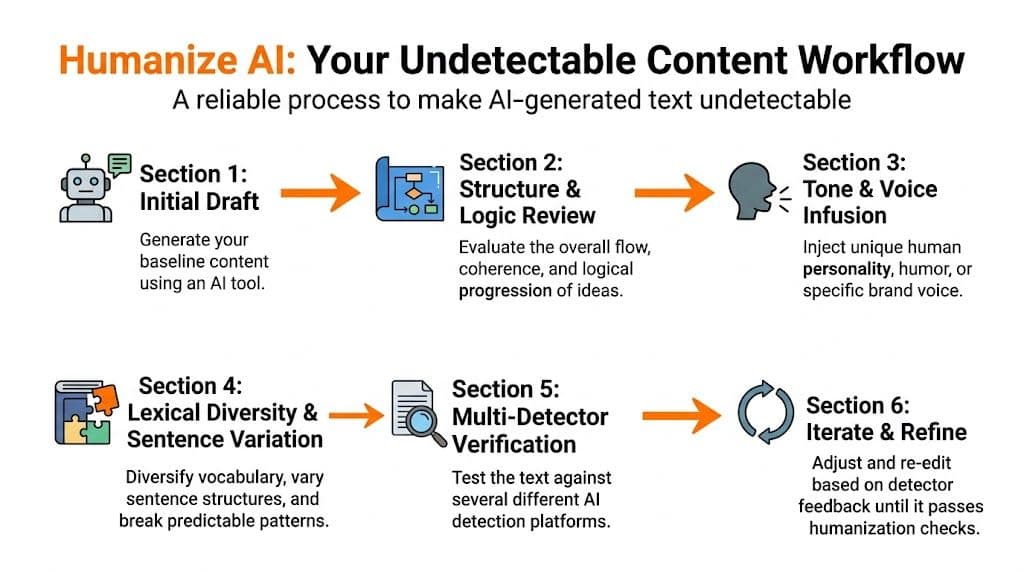

The best undetectable AI text humanizer workflow isn't a one-click trick. It's a repeatable process with three stages: build a solid draft, rewrite for human texture, then verify and refine.

Independent testing methods for tools in this category follow a similar sequence: generate a baseline draft, process it through a humanizer, retest it in detectors such as GPTZero, ZeroGPT, Originality.ai, Turnitin, and Writer, then judge both score changes and whether meaning and readability survived (testing methodology for AI humanizers).

Stage one starts before the humanizer

If your draft is bloated, vague, or overexplained, a humanizer won't rescue it cleanly. It will just rewrite weak material into slightly less weak material.

Start with a draft that already has:

- A clear argument so the text isn't just circling the topic

- Specific examples instead of broad filler

- Natural section logic where each paragraph has a job

- A defined audience because tone depends on who the reader is

A generation tool can help, but restraint matters. If you're creating from scratch, an AI writer is useful for outlines and first drafts. Just don't ask it to produce the polished final version and expect detector-safe output.

Stage two is where humanization actually happens

Run the draft through one dedicated humanizer, not three. Stacking multiple rewriting tools usually creates awkward synonyms, broken meaning, and a weirdly overprocessed voice.

A practical setup is to use one humanizer to break the obvious patterns, then edit by hand. If you want to compare approaches, this Undetectable AI review gives a useful frame for what these tools tend to improve and where they still fall short.

If you're choosing a tool for your own workflow, one option is Lumi Humanizer, which is built for turning AI-generated text into more natural prose while preserving the original meaning. Use it as a rewriting step, not as your final quality check.

What to change after the tool runs

Don't just skim for grammar. Read for pattern breaks.

I use a simple pass built around four checks:

- Sentence shape: Split one long sentence. Combine two short ones. Start one paragraph with a blunt sentence instead of a transition.

- Word choice: Replace generic phrases with words you would say in context. "Utilize strategic opportunities" becomes "use the opening while it's there."

- Voice markers: Add a mild opinion, a specific detail, or a concrete constraint. Even one line like "This usually breaks on technical copy" can make a paragraph feel authored.

- Cleanup: Humanizers sometimes overcorrect. If a sentence suddenly uses an odd synonym or shifts the meaning, fix it immediately.

A humanizer should remove machine patterns, not remove your point.

Stage three is revision by intent

Many people stop too early. They get a cleaner draft and assume they're done.

Instead, do one focused revision for each of these:

Logic

Check whether the paragraph still says what you meant. Humanizers can soften claims, shuffle emphasis, or flatten nuance.

Tone

Read it aloud. If it sounds like a polished customer support macro, keep editing.

Authenticity

Ask one question: would a person who knows this topic phrase it this way? If not, rewrite the sentence, even if it looks "good."

A useful support step here is a paraphrase tool when one passage still feels stiff but doesn't need full re-humanization. That's different from humanizing the whole draft. You're fixing a local phrasing problem, not trying to mask the source of the text.

A workable example

Say the original AI draft says:

Remote work has transformed the modern workplace by increasing flexibility, improving employee satisfaction, and enabling organizations to access a wider talent pool.

After a humanizer pass, it might become:

Remote work has changed how companies operate, giving employees more flexibility and helping businesses hire from a broader range of locations.

Better, but still generic.

A more convincing final version would be:

Remote work changed hiring first, culture second. Teams liked the flexibility, sure, but the bigger shift was access. A company that used to recruit in one city can now hire where the skill actually is.

That final version does three things well. It introduces contrast, varies sentence length, and adds a point of view.

Advanced Edits to Add Authentic Human Voice

Tool output often gets you from obvious AI to polished neutrality. That's progress, but polished neutrality still isn't a real voice.

The final lift usually comes from manual edits that feel small in isolation. Together, they make the writing sound authored instead of processed.

Start with one flat paragraph

Take this version:

Email marketing remains an effective strategy for businesses because it enables direct communication with customers, improves brand visibility, and supports conversion goals. Companies should create personalized messages, maintain consistent outreach, and analyze campaign performance regularly.

That's the kind of paragraph detectors often dislike and readers forget immediately.

Now revise it with intent:

Email still works, but not because it's magical. It works when the message sounds like it came from someone who knows the reader's problem. Most teams don't fail on send frequency. They fail when every campaign sounds polished, cautious, and interchangeable.

The information is similar. The experience of reading it is not.

Four edits that usually matter most

Add contrast instead of stacking benefits

AI loves list-like balance. Humans often write through tension.

Instead of this:

The tool saves time, improves quality, and increases consistency.

Try this:

It saves time, yes, but the bigger win is consistency. Fast drafts aren't rare anymore. Usable drafts are.

That small contrast creates a human thought pattern. It feels judged, not merely generated.

Use selective specificity

You don't need a dramatic personal anecdote in every paragraph. One grounded detail is enough.

Examples of small details that help:

- A real constraint like "client copy has less room for experimentation"

- A real setting like "in a semester paper" or "on a product page"

- A real judgment like "this sounds too polished for a founder-led brand"

These details don't need numbers to work. They need plausibility.

Break the rhythm on purpose

If every sentence is neat, the paragraph feels manufactured.

Use a short sentence after a long one. Use a fragment occasionally. Ask a rhetorical question when it fits.

For example:

The draft was technically clean. That was the problem.

Or:

You can keep the structure. But why keep the lifeless phrasing?

Those interruptions create pace. Pace creates texture.

Leave tiny imperfections where they sound natural

This part gets misunderstood. You are not trying to make the writing sloppy.

You are allowing the writing to sound less machine-balanced. A sentence can start with "But" if that's how the thought lands. A contraction can stay. A line can be slightly punchy rather than academically symmetrical.

Editing cue: If every sentence looks equally polished, polish is now the problem.

A before and after comparison

Here is a cleaner side-by-side example.

Before

Students can use AI tools to improve their writing process by generating ideas, organizing their thoughts, and producing drafts in a timely manner. However, they should ensure that the final content remains original, clear, and appropriate for submission requirements.

After

AI can help a student get unstuck fast. Brainstorming, rough structure, first draft, all useful. The risk starts later, when the draft still sounds generic and the student mistakes completion for readiness.

Why the second version works better:

- It sounds like advice from someone who has seen the mistake.

- The sentence lengths shift.

- The wording is simpler, but the point is sharper.

- "Completion for readiness" is a human judgment, not a template phrase.

One last pass catches the obvious misses

Before moving to detectors, run the text through a grammar checker. Not to sterilize the writing. Just to catch accidental errors introduced during rewrites, especially when a humanizer swaps syntax and leaves behind awkward agreement or punctuation.

That final pass should preserve voice, not flatten it.

Verifying Your Work Against AI Detectors

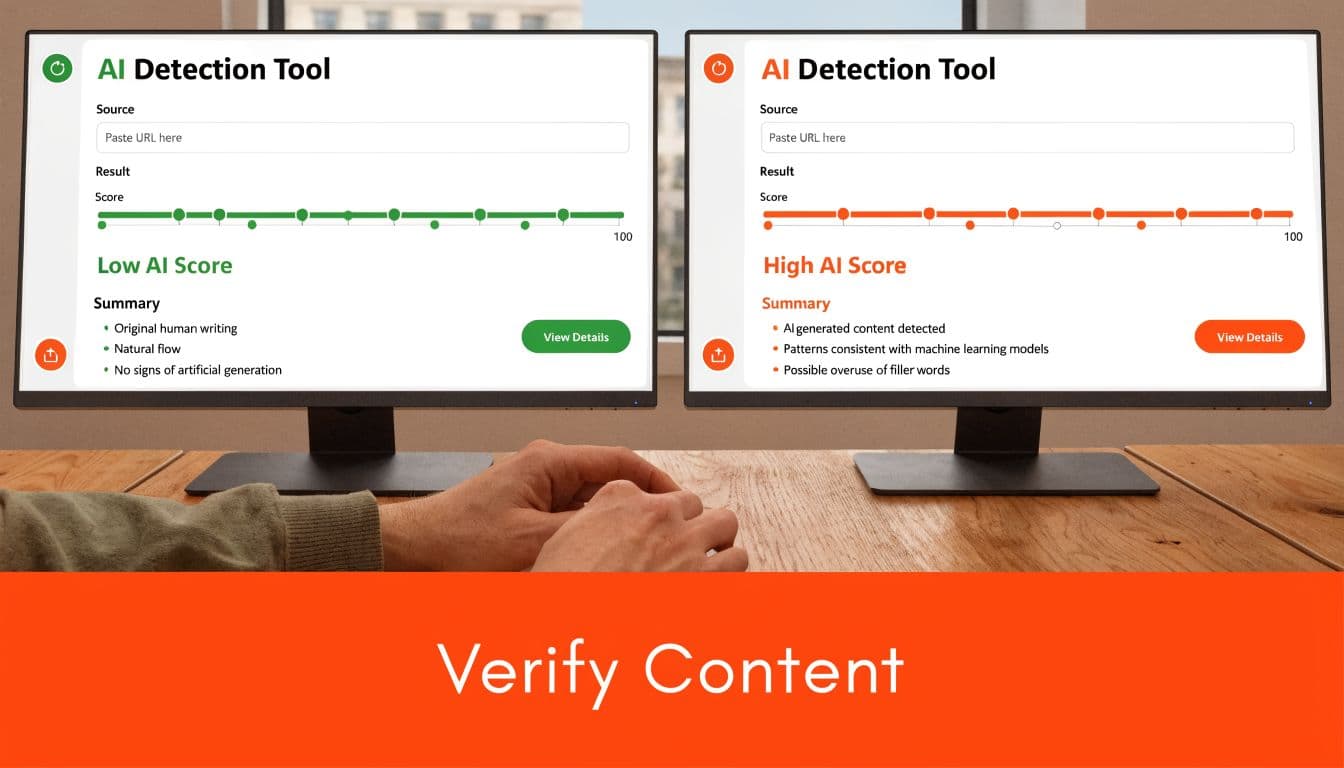

Relying on one detector is a mistake. Different platforms score the same passage very differently, so a single green result can give false confidence.

Independent review testing makes that clear. In one set of experiments, text humanized from ChatGPT still got 100% confidence as AI from Originality.ai, while GPTZero improved from 100% AI-generated to 91% AI-generated, which shows why detector results need to be read comparatively, not as absolute truth (Originality.ai review of Undetectable AI).

What a multi-detector check actually does

It doesn't prove a document is "safe." It shows whether your text triggers the same pattern across different systems.

That's useful for two reasons:

- Consensus matters more than one score: If three detectors react similarly, there is likely still a pattern problem in the text.

- Conflict reveals edge cases: If one detector flags heavily and another doesn't, the issue may be phrasing style, content type, or model-specific pattern recognition.
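If you log scores by hand, the consensus-versus-conflict reading can be made explicit with a few lines of code. The sketch below is only an illustration: `summarize_scores`, the detector names, and both thresholds are assumptions, and it calls no detector API. It also assumes you have already converted each tool's output to a 0 to 1 scale, which is itself a rough step because detectors report results in different ways.

```python
def summarize_scores(scores, flag_at=0.5, conflict_gap=0.4):
    """Classify hand-entered detector scores as consensus, conflict, or mixed.

    scores maps a detector name to an AI-likelihood in [0, 1].
    flag_at and conflict_gap are arbitrary illustrative thresholds.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    flagged = sorted(name for name, s in scores.items() if s >= flag_at)
    gap = max(scores.values()) - min(scores.values())
    if gap >= conflict_gap:
        verdict = "conflict"            # detectors disagree strongly
    elif len(flagged) == len(scores):
        verdict = "consensus: flagged"  # every detector sees a pattern
    elif not flagged:
        verdict = "consensus: clear"
    else:
        verdict = "mixed"
    return {"flagged": flagged, "gap": gap, "verdict": verdict}

if __name__ == "__main__":
    # Placeholder names and numbers, not real test results.
    print(summarize_scores({"detector_a": 0.91, "detector_b": 1.0, "detector_c": 0.95}))
    print(summarize_scores({"detector_a": 0.15, "detector_b": 0.90}))
```

A "conflict" verdict is a cue to reread the passage, not a sign that one tool is right and the other is wrong.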

A practical place to start is an AI detector that lets you screen drafts before you send them elsewhere. Use that as an early warning step, not as your only verdict.

How to read conflicting results

A lot of users panic when one detector gives a high AI signal after another looked fine. Usually that means one of three things:

The draft is still too even

This is common with cleaned-up business writing and essays. The content reads smoothly, but the rhythm never breaks.

The humanizer preserved meaning but not voice

Some tools are good at paraphrasing structure while leaving the text emotionally empty. The draft becomes different, but not authored.

The topic itself constrains variation

Technical, academic, and policy writing often has less room for expressive rewrites. In those cases, you need sharper examples and more deliberate sentence variation, not random synonym swaps.

If a detector still flags the draft, don't scramble the whole piece. Fix the passages that are most formulaic and test again.

A simple verification routine

Use the same routine every time so your results stay interpretable:

- Check the full draft first to catch broad pattern issues.

- Retest only the flagged sections after edits.

- Compare detectors, not just scores.

- Read the text aloud before the final test because awkward phrasing often predicts detector trouble.

This step matters more than many realize. A draft can look natural on a fast read and still carry the statistical fingerprints detectors are built to catch.

Long-Term Strategy and Ethical Use of AI Humanizers

The short-term goal is to reduce obvious AI signals. The long-term goal is better writing.

Those are related, but they aren't identical. If your process is built only around evasion, it will break as detectors adapt.

Current reviews already point to that problem. No humanizer offers 100% undetectability, and GPTZero's review notes that detectors are increasingly targeting mechanical rewriting patterns. It also notes that since November 2024, Forbes rankings have excluded Undetectable AI despite its claims, which signals how quickly the evaluation environment is shifting (GPTZero review on long-term detectability).

What holds up over time

The most durable workflow is the one that improves the writing itself.

That means:

- Using AI for draft acceleration, not for final authority

- Adding genuine perspective instead of cosmetic synonym churn

- Reviewing for clarity and meaning before chasing lower detector scores

- Keeping a quality standard that would make sense even if detectors disappeared tomorrow

This matters for ethical reasons too. Helping a non-native speaker produce smoother English is one thing. Passing off machine-written academic work as personal authorship is another.

A useful baseline is to treat humanizers as editing support, not identity replacement. If the final text doesn't reflect your understanding, your judgment, or your responsibility for the claim, the workflow has crossed a line.

For teams, the better question isn't "Can we still beat the detector next month?" It's "Can we publish text that sounds credible, accurate, and on-brand even as detectors change?"

That's the more stable strategy, and it's the one behind responsible use policies like Lumi Humanizer's guidance on responsible use.

Frequently Asked Questions

Can humanizing change the meaning of my text?

Yes, it can. This happens most often when the tool rewrites technical language, academic qualifiers, or brand-specific wording.

The fix is simple. Compare the humanized version to the original line by line for claims, tone, and emphasis. If a sentence became softer, broader, or oddly specific, rewrite that sentence manually.
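For longer drafts, a script can point you at the sentences that changed most, so you know where to read closely. The sketch below uses Python's standard `difflib` module. The function names and the `0.6` threshold are assumptions, and the method has real limits: it pairs sentences by position, so it misaligns if the humanizer split or merged sentences, and it measures character overlap, not meaning. A sentence can keep most of its wording and still lose a key qualifier.

```python
import difflib
import re

def split_sentences(text):
    """Naive splitter: break on ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def drifted_sentences(original, humanized, threshold=0.6):
    """Return (before, after, similarity) for sentence pairs that changed heavily.

    Sentences are paired by position. Similarity is difflib's character-level
    ratio in [0, 1]; pairs below the threshold are returned for manual review.
    """
    flagged = []
    pairs = zip(split_sentences(original), split_sentences(humanized))
    for before, after in pairs:
        ratio = difflib.SequenceMatcher(None, before, after).ratio()
        if ratio < threshold:
            flagged.append((before, after, round(ratio, 2)))
    return flagged

if __name__ == "__main__":
    original = "Results may improve in some cases. The effect was small."
    humanized = "Results will improve. The effect was small."
    for before, after, ratio in drifted_sentences(original, humanized):
        print(f"{ratio}: {before!r} -> {after!r}")
```

Use the output as a reading list. The check for claims, tone, and emphasis still has to be done by a person.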

Should I humanize the whole draft at once?

Usually, no. Large drafts are harder to control, and it's easier to miss places where the meaning drifted.

A better approach is to process content in manageable sections, then review those sections in context. That also makes it easier to isolate the paragraphs that keep triggering detectors.

What should I do if the text sounds unnatural after humanizing?

Don't run it through more rewriting tools right away. That often makes the problem worse.

Instead:

- Find the awkward sentence rather than reprocessing the whole piece

- Replace strange synonyms with plain language you would use

- Restore natural cadence by mixing short and medium-length sentences

- Check grammar at the end so cleanup doesn't flatten the voice

Is paraphrasing the same as using an undetectable AI text humanizer?

No. Paraphrasing changes wording for clarity or variation. Humanizing aims to make the writing sound more like a person wrote it.

Sometimes you need both, but they solve different problems.

Can detector scores guarantee safety?

No. Detector scores are signals, not promises.

Use them to find obvious issues, compare outputs, and guide revisions. Then judge the text as writing, not just as a score report.

If you want a cleaner workflow, try Lumi Humanizer to rewrite AI text into more natural language, then verify the result with your own editing pass and detector checks. If you're comparing plans for regular use, the pricing page is the next practical step.